Migrating a Million-Image Hosting Site: A Step-by-Step Guide

Background

imgurl.org (hereinafter ImgURL) is an image hosting website that xiaoz has operated since December 2017. ImgURL had been migrated several times before, but the data volume was small then, so those moves were straightforward. The data has since grown significantly: as of March 29, 2022, the site held 1,176,457 images.

The server is under heavy disk I/O pressure, mainly from MySQL reads and writes and from image processing tasks such as cropping and compression. ImgURL is hosted on a Psychz dedicated server whose mechanical hard drives have poor I/O performance, so the decision was made to migrate to a Kimsufi dedicated server. This article records and shares the migration process.

Website Structure

ImgURL consists of three main components: the application (PHP), the database (MySQL), and external storage. The external storage is divided into four parts:

- Local storage

- Backblaze B2 (Cloud storage, no migration needed)

- FTP

- Self-built MinIO (S3 compatible)

Note: Local storage, FTP, and MinIO data are all hosted on Psychz.

The data volumes for FTP and MinIO are the largest:

- FTP:

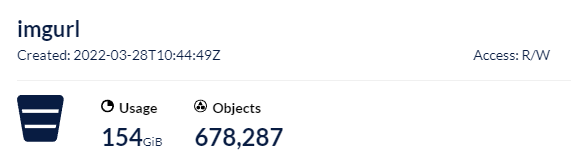

188GB(file count not counted) - MinIO data:

154GB, with 670,000 objects

Database Migration

Although the MySQL database contains millions of rows, its overall size is relatively small, so a standard export and import is sufficient.

First, export the MySQL database:

mysqldump -uxxx -pxxx imgurl>imgurl.sql

The exported SQL file was only 525MB. Then, use the scp command to copy and migrate the file (omitted here).

Next, import the database:

mysql -u imgurl -p imgurl<imgurl.sql

The database migration step was completed very quickly.

Note: Using the MySQL source command for the import is not recommended, because it prints a result for every statement it executes. With millions of statements, that volume of output can overwhelm the session and cause the import to fail.
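Because the dump is copied between servers before it is imported, it can be worth confirming that the file arrived intact. The following is a minimal sketch of such a check, not part of the original migration; file_digest and verify_dump are hypothetical helper names, and sha256sum from GNU coreutils is assumed to be available on both servers.

```shell
#!/bin/sh
# Print only the SHA-256 digest of a file (sha256sum ships with GNU coreutils).
file_digest() {
    sha256sum "$1" | awk '{print $1}'
}

# verify_dump is a hypothetical helper, not part of MySQL or any other tool.
# It succeeds only if FILE hashes to EXPECTED_DIGEST.
# Usage: verify_dump EXPECTED_DIGEST FILE
verify_dump() {
    [ "$(file_digest "$2")" = "$1" ]
}

# Example, run by hand:
#   old server:  sha256sum imgurl.sql
#   new server:  verify_dump "<digest printed on the old server>" imgurl.sql \
#                    && mysql -u imgurl -p imgurl < imgurl.sql
```

Chaining the import behind the check with && means a truncated or corrupted copy is never imported.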

Migrating Website Data

The rsync command was used to migrate the website data:

rsync -aqpogt -e 'ssh -p xxx' root@IP:/xxx /xxx

Parameter meanings:

- -a, --archive: Archive mode. Transfers files recursively and preserves symlinks, permissions, modification times, owner, group, and device files (equivalent to -rlptgoD).

- -q, --quiet: Quiet mode. This is crucial; without it, rsync prints a massive amount of output when handling many files.

- -p, --perms: Preserve file permissions.

- -o, --owner: Preserve file owner information.

- -g, --group: Preserve file group information.

- -t, --times: Preserve file modification times.

- -e 'ssh -p xxx': Specifies the remote shell command, here ssh on a non-standard port (not 22).

Since -a already implies -p, -o, -g, and -t, listing them again is redundant but harmless.

Since the website application part was not large, this step did not take long.

FTP Data Migration

The FTP data reached 188GB. While not huge, it contained a large number of small files. The rsync command was used again, but before starting, it is best to use the screen command to keep the task running in the background to avoid interruption due to long execution times.

# Create a new screen session

screen -S xxx

# Use rsync to migrate

rsync -aqpogt -e 'ssh -p xxx' root@IP:/xxx /xxx

The key point here is to keep the task running in the background using screen or similar tools, as there are many small files. The entire process took several hours.
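After a transfer of this size, a quick sanity check is to compare the number of files and the total bytes on both sides. The sketch below is one possible way to do that and was not part of the original migration; count_files and total_bytes are hypothetical helper names, and file names are assumed not to contain newlines.

```shell
#!/bin/sh
# Count regular files under a directory.
count_files() {
    find "$1" -type f | wc -l | tr -d ' '
}

# Sum the sizes in bytes of all regular files under a directory.
# LC_ALL=C keeps the summary line of wc labelled "total" so awk can skip it.
total_bytes() {
    LC_ALL=C find "$1" -type f -exec wc -c {} + |
        awk '$2 != "total" {sum += $1} END {printf "%.0f\n", sum}'
}

# Example, run by hand on each server with the real path in place of /xxx:
#   count_files /xxx
#   total_bytes /xxx
```

If both numbers match on the old and new server, the copy is at least complete in count and size. This does not prove that the contents are identical.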

The Most Troublesome Part: MinIO Data Migration

The MinIO instance used by xiaoz is a single-node setup, not a cluster. In a single-node setup, data is stored directly as source files on the disk. A simple way would be to use rsync to sync the source files directly, but this carries unknown risks. Therefore, a safer approach was chosen: using rclone sync for synchronization.

The MinIO information for both servers was pre-configured in rclone (steps omitted), named psychz_s3 and kimsufi_s3. Initially, data from the year 2021 in one bucket was migrated:

rclone sync -P psychz_s3:/imgurl/imgs/2021 kimsufi_s3:/imgurl/imgs/2021

This took 13 hours and generated many errors with the message:

The Content-Md5 you specified did not match what we received.

The number of failed files reached 55,269, meaning 55,269 files failed to migrate due to MD5 checksum mismatches. The cause was likely that non-standard operations were previously used to directly modify MinIO source files (MinIO single-node allows direct access to source files; image source files were compressed, changing their MD5 values). Due to the large number of files and the poor I/O of Psychz, rclone spent a significant amount of time scanning.
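The suspected cause can be illustrated locally. The sketch below assumes that the object store records the MD5 of an object at upload time (for simple, non-multipart uploads, S3-style stores typically expose this as the ETag) and that the file on disk is later changed without going through the store. The file name and contents are made up for the demonstration.

```shell
#!/bin/sh
# Hex MD5 of a file, the format typically used for the ETag of a simple upload.
md5_hex() {
    md5sum "$1" | awk '{print $1}'
}

work=$(mktemp -d)
printf 'original image bytes' > "$work/photo.jpg"

# Checksum recorded at upload time (stands in for the stored ETag).
recorded=$(md5_hex "$work/photo.jpg")

# Simulate compressing the file in place, behind the object store's back.
printf 'smaller bytes' > "$work/photo.jpg"

# The bytes now on disk no longer match the recorded checksum.
current=$(md5_hex "$work/photo.jpg")
if [ "$recorded" != "$current" ]; then
    status="mismatch"
else
    status="match"
fi
echo "recorded=$recorded current=$current -> $status"
rm -rf "$work"
```

A client that trusts the recorded value and sends it along with the current bytes would be rejected by the receiving server, which is consistent with the Content-Md5 error above.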

Subsequently, the remaining data was migrated with an optimized command:

rclone sync --s3-upload-cutoff 0 --tpslimit 10 --ignore-checksum --size-only -P psychz_s3:/imgurl/imgs/2022/03 kimsufi_s3:/imgurl/imgs/2022/03

--s3-upload-cutoff 0

For small objects not uploaded as multipart uploads, rclone uses the ETag header as the MD5 checksum. However, for objects uploaded as multipart or using server-side encryption (SSE-AWS or SSE-C), the ETag header is no longer the MD5 sum of the data. Therefore, rclone adds an additional piece of metadata, X-Amz-Meta-Md5chksum, which is a base64-encoded MD5 hash (in the same format as Content-MD5).

For large objects, calculating this hash can take some time, so you can disable adding this hash using --s3-disable-checksum. This means these objects will not have an MD5 checksum.

Please note that reading this hash from the object requires an additional HEAD request because the metadata is not returned in the object list.

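Even after reading this official description, the exact effect of --s3-upload-cutoff was still unclear to xiaoz. For reference, the rclone documentation defines the flag as the size cutoff for switching to chunked (multipart) upload, so a value of 0 sends every file as a multipart upload.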

--tpslimit 10

Limits rclone to at most 10 transactions per second. A transaction is roughly one API call to the storage backend.

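--ignore-checksum

Skips the hash (MD5) check on transferred files. This is very useful for large numbers of small files but may reduce reliability.

--size-only

Compares files by size only when deciding whether a file needs to be transferred, ignoring modification time and checksum.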
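The reliability trade-off of comparing by size alone can be seen with two files of equal length but different content. This is a toy illustration of the idea only, not how rclone implements the comparison; the helper names and file contents are hypothetical.

```shell
#!/bin/sh
# Size of a file in bytes.
size_of() { wc -c < "$1" | tr -d ' '; }

# True if both files have the same size.
same_size() { [ "$(size_of "$1")" = "$(size_of "$2")" ]; }

# True if both files have the same MD5 hash.
same_md5() { [ "$(md5sum < "$1")" = "$(md5sum < "$2")" ]; }

work=$(mktemp -d)
printf 'AAAA' > "$work/src.jpg"
printf 'AAAB' > "$work/dst.jpg"   # same length, different content

# A size-only comparison treats the destination as up to date.
if same_size "$work/src.jpg" "$work/dst.jpg"; then
    size_verdict="skip"
else
    size_verdict="copy"
fi

# A checksum comparison notices the difference.
if same_md5 "$work/src.jpg" "$work/dst.jpg"; then
    md5_verdict="skip"
else
    md5_verdict="copy"
fi

echo "size-only: $size_verdict, checksum: $md5_verdict"
rm -rf "$work"
```

For image files that are written once and never edited to the same length, a size match is usually a reasonable proxy, which is the bet the optimized command makes in exchange for speed.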

Monitoring showed that the optimized command significantly improved the performance of rclone sync compared to the original, and system load dropped sharply (likely due to reduced scanning).

For 154GB of data and 670,000 objects, synchronizing MinIO data using rclone sync took over 30 hours. There are likely many other optimization parameters in rclone; interested readers can explore the official rclone documentation.
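Since the data was migrated one prefix at a time (2021 first, then 2022/03), the per-prefix runs can be wrapped in a small script. The sketch below reuses the remote names and flags from the commands above; sync_prefix and sync_year are hypothetical helpers, and the year/month directory layout is an assumption inferred from the paths shown.

```shell
#!/bin/sh
# RCLONE can be overridden (for example RCLONE=echo) to print the commands
# instead of running them.
RCLONE=${RCLONE:-rclone}

# Sync one prefix under imgurl/imgs with the flags used in this article.
sync_prefix() {
    "$RCLONE" sync --s3-upload-cutoff 0 --tpslimit 10 --ignore-checksum --size-only -P \
        "psychz_s3:/imgurl/imgs/$1" "kimsufi_s3:/imgurl/imgs/$1"
}

# Sync every month of one year, one prefix at a time (assumes a YYYY/MM layout).
# Stops at the first prefix that fails so it can be inspected and rerun.
sync_year() {
    for month in 01 02 03 04 05 06 07 08 09 10 11 12; do
        sync_prefix "$1/$month" || return 1
    done
}

# Example, run by hand inside a screen session:
#   sync_year 2021
```

Working in smaller prefixes keeps each run short enough to retry after a failure without rescanning everything.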

Summary

As of March 30, 2022, https://imgurl.org/ has been successfully migrated from Psychz to Kimsufi. The overall difficulty was not high, but the rclone sync synchronization of MinIO data took a considerable amount of time. The key takeaways are:

- MySQL import: The source command is not recommended. Instead, use: mysql -u imgurl -p imgurl<imgurl.sql

- Long-running tasks: If a task is expected to take a long time, always use screen or a similar tool to keep it running in the background, so that it is not interrupted if the window closes.

- rclone optimization: rclone sync efficiency can be improved with specific parameters, such as ignoring hash checksums (--ignore-checksum).

- Local file migration: For Linux local file migration, rsync is recommended for its simplicity and efficiency.